Headlines (77 articles)

- We tried Google’s AI glasses and they’re almost there TechCrunch AI May 22, 2026 03:37 PM Google demoed prototype Android XR glasses that overlay Gemini-powered translation, navigation, and other information directly into your field of view.

- Even If You Hate AI, You Will Use Google AI Search Wired AI May 22, 2026 03:00 PM The search giant’s AI-crafted answers are so convenient, you’ll be sucked in—to the detriment of the web and the artists and thinkers behind it.

- AI put "synthetic quotes" in his book. But this author wants to keep using it. Ars Technica AI May 22, 2026 02:05 PM 1 min read Steven Rosenbaum explains how inaccurate quotes got into his book The Future of Truth.

Journalist and author Steven Rosenbaum has more reasons than most to distrust AI.

His new book, The Future of Truth: How AI Reshapes Reality, is all about "how Truth is being bent, blurred, and synthesized" thanks to the "pressure of fast-moving, profit-driven AI." Yet a New York Times investigation this week found what Rosenbaum now acknowledges are "a handful of improperly attributed or synthetic quotes" linked to his use of AI tools while researching the book.

These quotes include one that tech reporter Kara Swisher told the Times she "never said" and another that Northeastern University professor Lisa Feldman Barrett said "don’t appear in [my] book, and they are also wrong." Rosenbaum is now working with editors on what he says is a full "citation audit" that will correct future editions.

- The literary world isn’t prepared for AI The Verge AI May 22, 2026 10:30 AM 1 min read You know it when you see it.

Since 2012, the British literary magazine Granta has published the regional winners of the annual Commonwealth Short Story Prize. This year, however, there was something off about one of the selections for the prestigious award: It appears to have been written by AI.

Jamir Nazir's "The Serpent in the Grove" has many of the hallmarks of LLM-generated prose - mixed metaphors, anaphora, lists of threes. (I'm aware this, too, is a list of threes, and I promise I wrote this post myself, unassisted, as I write all things.) I'll admit I was initially unconvinced by the allegation that Nazir's story had been generated by AI. I know people are using …

-

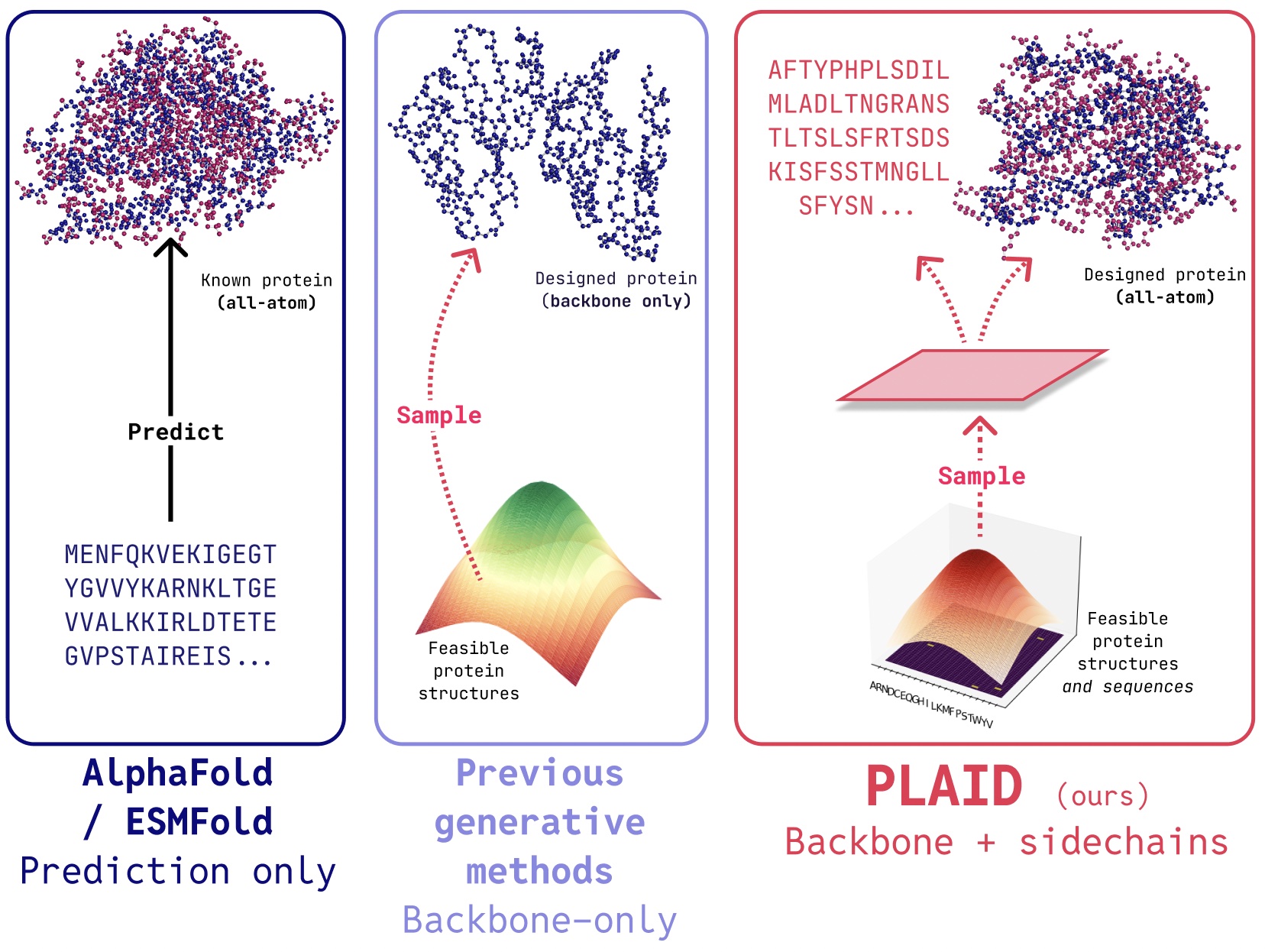

Google I/O showed how the path for AI-driven science is shifting MIT Technology Review May 22, 2026 10:00 AM 6 min read Two years ago, an AI tool won Google DeepMind a Nobel. Researchers are now climbing toward a new goal.

During Tuesday’s Google I/O keynote, Demis Hassabis, the CEO of Google DeepMind, proclaimed that we are currently “standing in the foothills of the singularity.” It was a striking statement—the singularity is the theoretical future moment when AI rapidly exceeds human intelligence and dramatically transforms the world. But what struck me as I listened in the audience was the context in which he said those words.

He was on stage to close out the session with a segment on scientific AI, the centerpiece of which was a video detailing how the company’s weather prediction software provided an advance alert about Hurricane Melissa’s catastrophic landfall in Jamaica last year—and potentially saved lives. If that software, called WeatherNext, helped anyone escape the storm or better fortify their home, that’s an enormous and meaningful achievement. But it’s hardly evidence of an impending singularity.

The juxtaposition of Hassabis’ lofty rhetoric with the real-world results of WeatherNext highlighted the tension between two very different approaches to AI for science. The first focuses on AI tools, like WeatherNext, that are designed and trained to solve specific scientific problems. The second is agentic, LLM-based systems that could one day execute cutting-edge research projects without human involvement.

This second vision powers a great deal of AI enthusiasm right now, including recent excitement around recursive self-improvement, or the idea that AI systems could eventually become the primary drivers of AI advancement—a process that would get faster and faster as the AI systems grow smarter. And agentic systems are now making real research contributions, sometimes with limited human guidance.

Just this week, Pushmeet Kohli, Google Cloud’s chief scientist, published a piece in a special AI and science issue of the journal Daedalus, writing: “We are moving toward AI that doesn’t just facilitate science but begins to do science.” With autonomous AI scientists on the horizon, it’s harder to justify massive efforts to develop super-specialized tools—even one like AlphaFold, for which DeepMind scientists won a Nobel Prize, or a potentially life-saving system like WeatherNext. It also heralds a far stranger future for science, in which humans and AI systems collaborate as peers—or AI even makes scientific progress on its own.

To be clear, Google does not appear to be abandoning its work on specialized AI for science tools. AlphaGenome and AlphaEarth Foundations, which are trained for genetics and Earth science applications respectively, were released last summer, and the newest version of WeatherNext came out in November.

What’s more, such tools remain extremely popular among scientists. Last year, for instance, Google reported that protein structure predictions from AlphaFold have been used by over three million researchers worldwide. And Isomorphic Labs, a Google subsidiary that aims to use AlphaFold and related technologies to develop new drugs, just raised a $2 billion Series B funding round.

But there are concrete signs of realignment, in both enthusiasm and resources. Last month, the Los Angeles Times reported that Google fellow John Jumper, who won the Nobel for AlphaFold, is now working on AI coding, not on science-specific AI tools. It’s not surprising that Google is assigning its best minds to the coding problem, as the company has recently taken a reputational hit because its coding tools don’t currently stand up to those offered by Anthropic and OpenAI. But it may also signal a prioritization of agentic science on Google’s part, as coding abilities are key to the success of some of those systems.

Across the industry, agentic researcher systems are showing real potential. This week, OpenAI announced that one of their models had disproved an important mathematics conjecture—perhaps the most meaningful contribution that generative AI has made to mathematics so far, according to some mathematicians.

Importantly, the model used by OpenAI is not specialized for solving mathematical problems, or even for research; according to the company, it’s a general-purpose reasoning model in the vein of GPT-5.5. If general agents can make independent contributions to mathematical research, they might soon be able to do the same in science (though the fact that ideas in science must be verified experimentally makes it a tougher domain for AI).

Google is certainly devoting a lot of attention toward an agent-driven scientific future. The big scientific announcement at I/O was the new Gemini for Science package, which unites several of the company’s LLM-based scientific systems under one brand.

This includes the hypothesis-generating AI Co-Scientist and algorithm-optimizing AlphaEvolve, which are still not publicly available—but as Google is now allowing any researcher to apply for access to Gemini for Science, they may soon see wider adoption in the scientific community. Scientists who were involved in early testing are enthusiastic about their potential: Gary Peltz, a Stanford geneticist, compared using the AI Co-Scientist to “consulting the oracle of Delphi” in a Nature Medicine article.

Gemini for Science isn’t incompatible with specialized tools; to the contrary, agentic systems can be designed to call on such tools when they might be useful. And no agentic system can predict the structure that a protein will fold into without AlphaFold’s help (at least not yet). But the company seems to be shifting its public image—and at least some resources and personnel, such as Jumper—away from specifically developing those kinds of tools. Though it has only been five years since AlphaFold solved the protein-folding problem, both the technology and the discourse have quickly moved beyond that once-revolutionary achievement.

Google has been careful to position this new set of scientific agents as an accelerant for human scientists, rather than a replacement for them—the choice of the name AI Co-Scientist as opposed to AI Scientist, for instance, appears quite deliberate. Hassabis uses that same human-centric framing when he talks about changes in the landscape of scientific AI. “For the next decade or so, we should think about AI as this amazing tool to help scientists,” Hassabis said in an interview published in the Daedalus issue. “Beyond that timeframe, it is hard to say with any certainty, but perhaps these systems will become more like collaborators.”

But no one can be an effective scientific collaborator without also being a skilled scientist in their own right. And if Hassabis is anywhere near the mark when he talks about the “foothills of the singularity,” then AI scientists could eventually exceed the capabilities of their human counterparts.

In a discussion with the journalist Mike Allen at I/O, Hassabis spoke of how he was initially inspired to pursue AI when he observed how progress in physics had stagnated since the 1970s; he wondered whether the human mind had reached its limits in that domain, and if AI could help to overcome that barrier. Superhuman agentic scientists would certainly fit that bill. We might not ever get anywhere near there, but Google seems to be aiming itself toward that summit.

- Why would you disrespect your favorite artist with an AI remix? The Verge AI May 22, 2026 10:20 AM 1 min read What superfan wants this?

Prompt something better than Beyoncé’s “Break My Soul,” I dare you. | Image: Cath Virginia / The Verge | Photo from Getty Images

AI covers and remixes of songs are already a blight on the internet. Spotify, YouTube, TikTok, and Instagram are awash in flat reggae versions of "Smells Like Teen Spirit," dinky country renditions of The Weeknd, and monotonous Motown reimaginings of AC/DC. Now, a new tool from Spotify will make them even easier to generate and share.

Spotify and Universal Music Group (UMG) signed a licensing deal that will allow users to generate remixes and covers from UMG's catalog. How exactly it will work, beyond being "powered by generative AI technology," or how much it will cost, is unclear. They're positioning this as a premium subscription add-on …

- The Gulf’s AI Boom Has an Undersea Cable Problem Wired AI May 22, 2026 09:00 AM Hyperscalers are pushing the Gulf to rethink internet infrastructure as AI raises the stakes of cable disruptions.

- Samsung’s memory chip employees negotiated $340,000 bonuses this year The Verge AI May 22, 2026 07:05 AM 1 min read But the deal may still be a win for Samsung.

48,000 Samsung workers had threatened to strike unless bonus caps were lifted. | Photo: Jung Yeon-je / AFP via Getty Images

Details have emerged about a tentative deal struck between Samsung and semiconductor employees who had threatened to strike. The deal reportedly makes some workers eligible for average annual bonuses of $340,000.

The proposed 18-day strike had hinged on Samsung's bonus cap for employees in the semiconductor division and followed a substantial rise in the possible bonuses available to employees of SK Hynix, another South Korean chipmaker enjoying a boom thanks to demand for AI components.

Under the terms of the new deal, Reuters reports that all chip workers will receive 50 percent of their annual salary as a regular bonus in cash. Further …

- Roundtables: Can AI Learn to Understand the World? MIT Technology Review May 21, 2026 08:41 PM 1 min read Watch a subscriber-only discussion exploring how AI might enter the physical world.

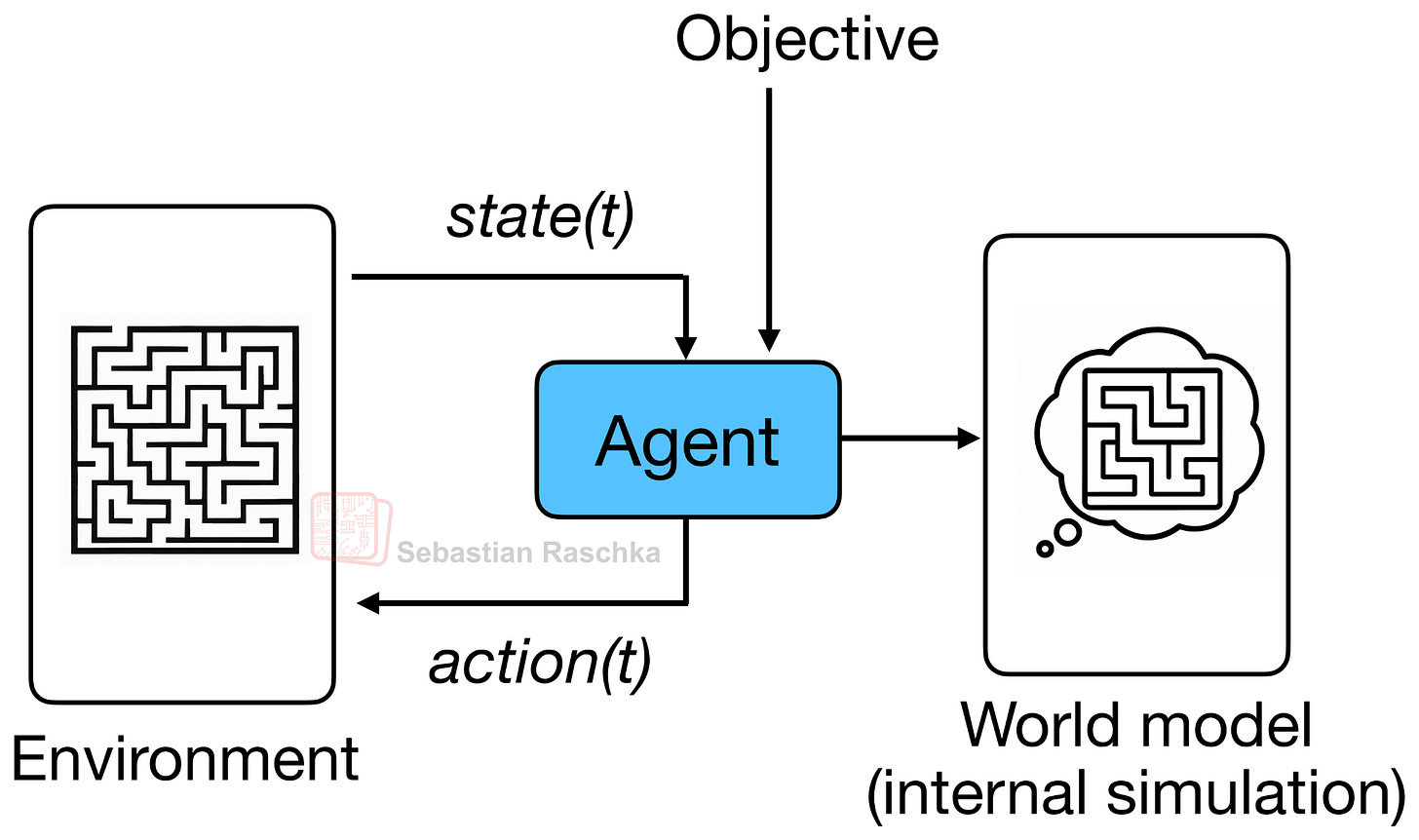

AI companies want to build systems that understand the external world and overcome the limitations of LLMs. Recent developments have brought world models to the forefront of the AI discussion.

Watch a conversation with editor in chief Mat Honan, senior AI editor Will Douglas Heaven, and AI reporter Grace Huckins exploring how AI might enter the physical world.

Speakers: Mat Honan, Editor in Chief, Will Douglas Heaven, AI Senior Editor, and Grace Huckins, AI Reporter

Recorded on May 21, 2026

- Spotify and Universal Music strike deal allowing fan-made AI covers and remixes TechCrunch AI May 21, 2026 07:45 PM Spotify is partnering with Universal Music Group to let Premium subscribers create AI-generated song covers and remixes, with participating artists receiving a share of the revenue.

- Can OpenAI’s ‘Master of Disaster’ Fix AI’s Reputation Crisis? Wired AI May 22, 2026 12:04 AM Global affairs chief Chris Lehane wants to tone down the debate over AI’s societal impacts—and get states to pass laws that won’t derail OpenAI’s meteoric rise.

- Six search engines worth trying now that Google isn’t really Google anymore TechCrunch AI May 21, 2026 07:19 PM Google is about to look really different, and if you're not a fan of the AI overview feature, then you're not going to like what's coming.

- Scaling creativity in the age of AI MIT Technology Review May 21, 2026 07:16 PM 6 min read Building customer trust with on-brand content production has become a strategic imperative.

Storytelling is core to humanity’s DNA, stemming from our impulse to express ideals, warnings, hopes, and experiences. Technology has always been woven through the medium and the distribution: from early humans’ innovation of natural pigments and charcoals for cave paintings to literal representation by the camera.

The landscape of storytelling continues to shift under our feet. Social and streaming platforms have multiplied, audiences have fragmented, and our demand for fresh, unique media is insatiable. A recent McKinsey podcast cites that we are watching upwards of 12 hours of video content daily, often on multiple devices and multiple platforms.

All this content is expensive to produce: With a baseline budget of $150M, a Hollywood feature runs $1M per minute of finished film; prestige streaming content is in the hundreds of thousands per minute. And since consumers want to engage with authentic, original material, every company is now effectively a media company. That means we all face the same pressure: more content, with the same time and budget constraints.

There is no longer a question whether to use AI for content; the math doesn’t work any other way. What leaders need to focus on now is how to adapt responsibly, protect brand integrity, uplift team creativity, and build customer trust.

A few things worth holding onto as this era accelerates:

- AI amplifies what’s already there, both good and bad. Weak strategy stays weak.

- Responsible adoption means knowing what’s in your tools and models. Provenance and transparency are the foundation, not the finish line.

- Scale without taste is just noise. Investing in your team’s judgment is what makes more content matter.

- Fundamentals of great storytelling have not changed. Regardless of format or channel, what makes audiences lean in are still characters, arc, ingenuity, and surprise.

The permanent sprint

Creative teams are trapped on the endless hamster wheel of production, and it’s not slowing down. According to Adobe research, content demand will grow 5x over the next two years. Social content shelf life is now measured in hours, not weeks. Keeping fresh work in the pipeline is a permanent sprint, requiring teams to rethink how creative production functions.

The first move is freeing creative teams by having AI absorb the repetitive work so they have space for the strategic creative decisions that require human ingenuity. In a recent study from Adobe, 94% of creatives report that AI helps them produce content faster, saving an average of 17 hours per week. That recovered time is not a productivity metric; it is renewed creative capacity.

As a use case, Nestlé offers a useful blueprint. Its teams operate across 180 countries with a portfolio of iconic brands including Nescafé, KitKat, and Purina. Using Adobe Firefly Custom Models embedded in existing content workflows allows teams to generate assets in a brand-informed style without disrupting creative flow. At Nestlé, workflow cycle times dropped 50%. “With Firefly Custom Models, we can react at the speed of culture. It’s the closest thing we’ve had to magic,” says Wael Jabi, global strategic comms lead for KitKat.

As we move into the agentic era, the possibilities expand further. Adobe’s Creative Agent thinks in systems, not tasks, orchestrating across workflows, apps, and processes to close the gap between idea and execution, and get teams out of the production cycles that consume their productivity.

Build for your brand, not every brand

A company’s brand is how the world recognizes and connects with them. And it’s more than a collection of assets—it is dynamic, subjective, and expressed in thousands of micro-decisions made every day by the people who know it best. As production scales, keeping everything tuned to the brand gets more challenging. Off-the-shelf AI cannot replicate the level of nuance creative teams bring to content, and there’s a real cost to getting it wrong; diluting a brand in market with almost-right output is not an acceptable option. Customer trust is fragile.

Starting with a bespoke AI model built with Adobe Firefly Foundry addresses this directly. Firefly Foundry starts with a commercially safe base model and trains further on a company’s IP, making it possible to produce content that genuinely reflects the team’s vision.

And to ensure that Firefly Foundry models truly represent the creatives at the helm, Adobe has partnered with film studios like Wonder Studios, Promise.ai, and B5 Studios, and the “big three” talent agencies CAA, UTA, and WME to deeply understand what it means (and what it takes) to build an IP-immersive model that keeps creatives at the center as these film studios and talent agencies scale their visions. These brand ecosystems can accelerate nearly every phase of the production process, from ideation and storyboarding to production and promotion, all while preserving artistry and authorship. And to power the next generation of creativity and content, Adobe has recently announced a strategic partnership with NVIDIA, delivering best-in-class creative control along with enterprise-grade, commercially safe content at scale.

Generic AI gives teams a starting point. But a model trained on a brand’s own IP gets them to the finish line, while still leaving room for the creative calls that matter most.

When agents become the audience

AI is not only reshaping how we create; it is reshaping how customers find and engage with brands entirely. According to Adobe Digital Insights, AI-powered shopping has surged 4,700%. Agentic web traffic is up 7,851% year over year. Yet, most businesses still have significant gaps in AI-led brand visibility. If content is invisible to AI agents, then a brand is invisible to customers.

Major League Baseball is ahead of this curve. Using Adobe LLM Optimizer, the league monitors how its content surfaces across AI interfaces and makes real-time adjustments to maintain visibility. As fans search for tickets, stats, or game-day experiences, the league ensures its brand shows up wherever that search is happening. And with Adobe’s recent acquisition of Semrush, brand visibility goes even further.

The agentic web created an entirely new content surface that did not exist two years ago, and this exponential proliferation of content illustrates precisely why scaled, on-brand content production has become a strategic imperative. A well-built agentic foundation offers full visibility into (and control over) every piece of content, from production to performance.

How to prepare for AI integration

Here are a few steps to get started:

Audit before automation. Content supply chains usually include duplicated processes, unclear ownership, and assets living in many different places. Before AI can accelerate anything, develop a clear map of how content moves through the organization today: who creates it, who approves it, where it lives, and where it breaks down. AI applied to a broken process just breaks it faster.

Walk through workflows. Resist the urge to overhaul everything at once. Start with production tasks that are high-volume, low-stakes, and well-defined: asset resizing, localization, and background generation. Use those wins to build internal confidence before expanding into more complex creative territory.

Build responsible governance from the start. Governance added as an afterthought becomes a bottleneck. Building it in from the beginning creates a competitive advantage that lets teams move fast with confidence. And this means clear policies on model training, content provenance, human review thresholds, and communicating AI use to customers. The brands that earn lasting trust will treat transparency as a feature, not a footnote.

This content was produced by Adobe. It was not written by MIT Technology Review’s editorial staff.

- Meta Is in Crisis, Google Search’s Makeover, and AI Gets Booed by Graduates Wired AI May 21, 2026 08:44 PM In this episode of “Uncanny Valley,” we unpack the mass layoffs at Meta, big announcements at Google I/O, and the latest backlash against AI.

- Trump delays AI security executive order, saying language ‘could have been a blocker’ TechCrunch AI May 21, 2026 05:30 PM President Trump delayed signing an executive order that would have required pre-release government security reviews of AI models, citing dissatisfaction with the order's language.

-

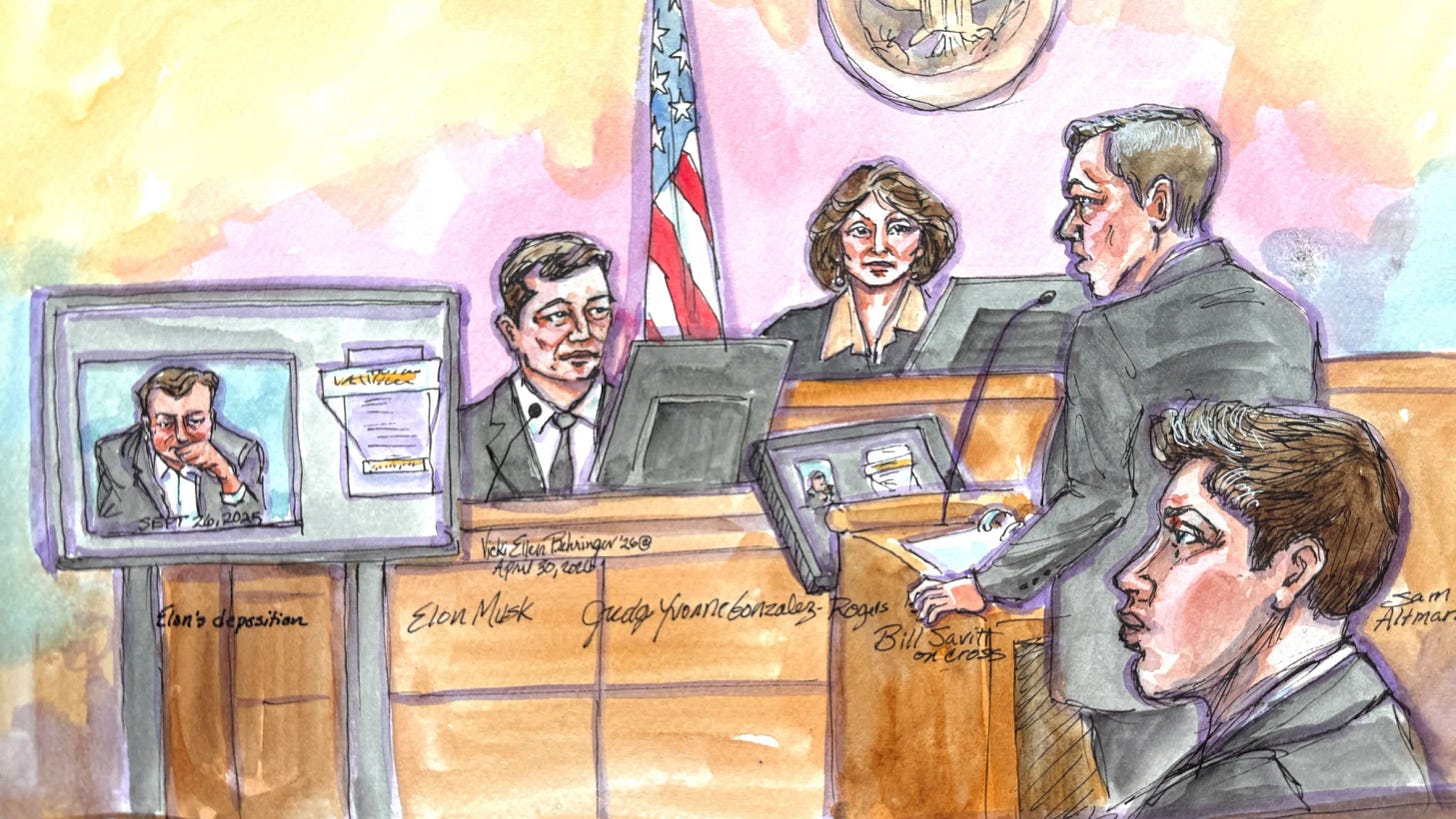

All of the updates from Elon Musk and Sam Altman’s battle over OpenAI The Verge AI May 21, 2026 04:15 PM 20 min read

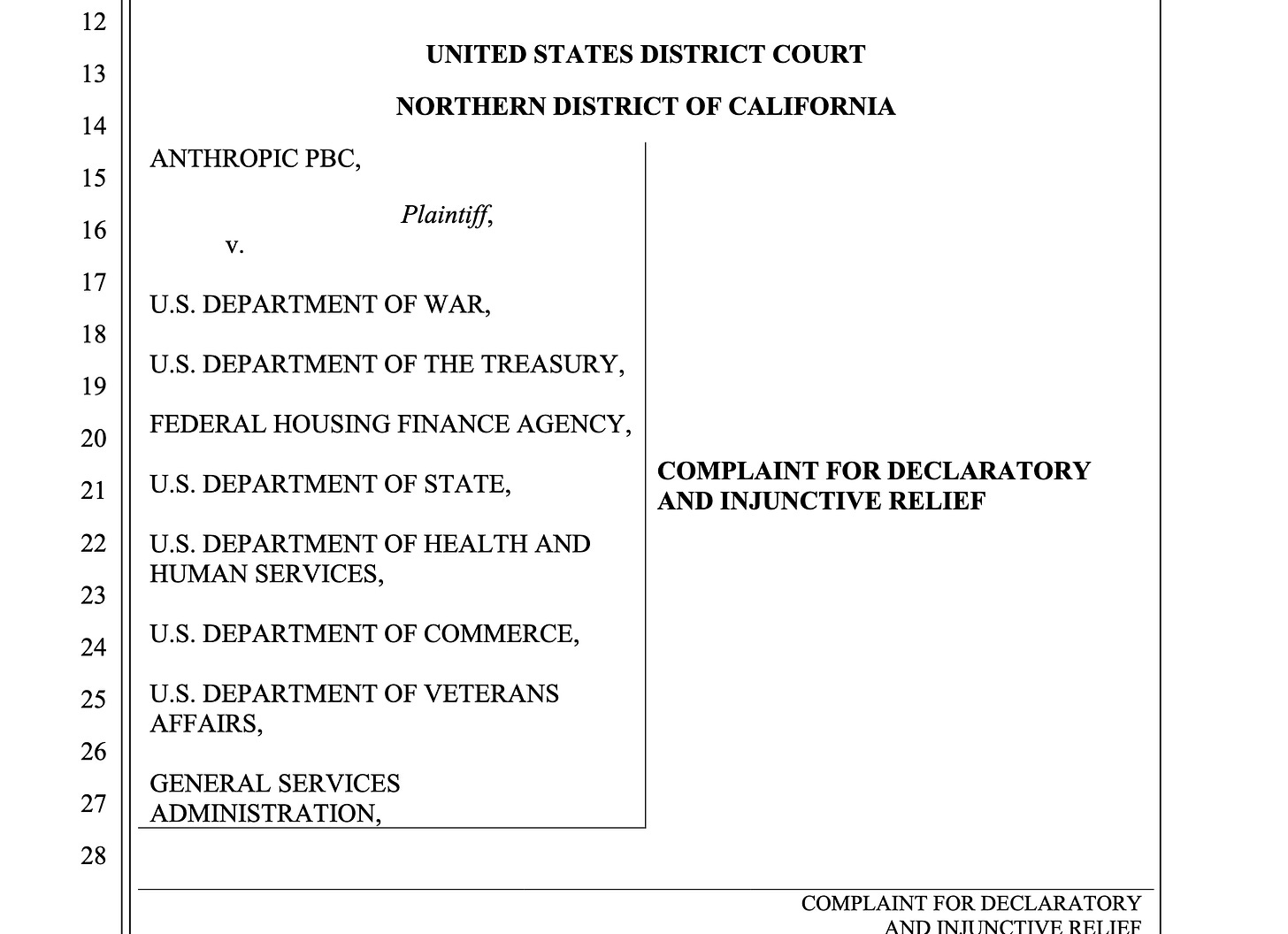

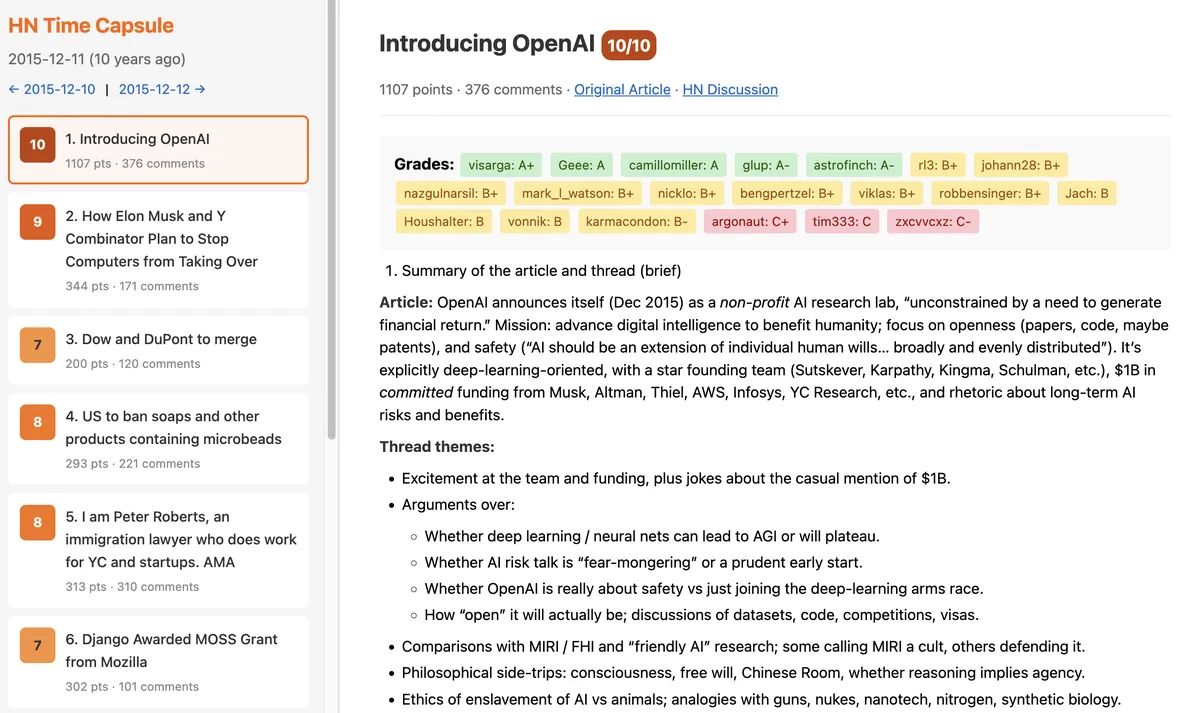

Sam Altman and Elon Musk are facing off in a high-stakes trial that could alter the future of OpenAI and its most well-known product, ChatGPT. In 2024, Musk filed a lawsuit accusing OpenAI of abandoning its founding mission of developing AI to benefit humanity and shifting focus to boosting profits instead.

After nearly a month, with the trial featuring testimony from Musk, Altman, Microsoft CEO Satya Nadella, OpenAI cofounder Greg Brockman, former OpenAI board member and mother of several of Musk’s children Shivon Zilis, and a few others, the jury deliberated for a couple of hours before returning to the “room full of untrustworthy, unreliable people all fighting with each other” with a verdict, deciding to dismiss all charges due to the statute of limitations.

Musk was a cofounder of OpenAI and claims that Altman and Brockman tricked him into giving the company money, only to turn their backs on their original goal. However, OpenAI countered that “this lawsuit has always been a baseless and jealous bid to derail a competitor,” one meant to boost Musk’s own SpaceX / xAI / X companies, which have launched Grok as a competitor to ChatGPT.

In his lawsuit, Musk asked for the removal of Altman and Brockman, and for OpenAI to stop operating as a public benefit corporation.

People to Know

Plaintiff

Elon Musk — plaintiff, OpenAI cofounder and now CEO of rival xAI

Steven Molo — lead counsel for the plaintiff

Jared Birchall — manager of Musk’s family office

Shivon Zilis — former OpenAI board member who shares multiple children with Musk

Defendant

Sam Altman — defendant, CEO of OpenAI

William Savitt — lead counsel for the defendant

Greg Brockman — president of OpenAI as well as a cofounder

Ilya Sutskever — former chief scientist at OpenAI and a cofounder

Judge

Yvonne Gonzalez Rogers — aka YGR, trial judge

Here’s all the latest on the trial between Musk and Altman:

- Musk v. Altman: Much ado about nothing

- Musk v. Altman proved that AI is led by the wrong people

- Elon Musk loses his case against Sam Altman

- The jury has delivered a unanimous verdict.

- An observer has just been ejected from the court by the US marshals.

- C. Paul Wazzan is the expert called by Musk to determine damages.

- Closing time

- “I told you in my opening statement you wouldn’t hear very much from Microsoft, and you haven’t.”

- “I feel like I’m going to miss you all,” Savitt tells the jury.

- Savitt calls out the fact that Musk is abroad with President Trump today.

- Savitt says Musk has “selective amnesia.”

- Behold, the Elon Musk jackass trophy

- Savitt is talking about the statute of limitations.

- Here’s the jackass trophy that the jury didn’t get to see.

- We are back from our break. William Savitt is taking it home for the OpenAI defense.

- Tesla AI is evidence of Musk’s failure, Eddy says.

- “The documents tell the truth here,” Eddy says.

- Eddy suggests that Musk’s donated Teslas were, effectively, bribes.

- Eddy is, wisely, leaning hard on chronology in explaining their defense.

- Sarah Eddy is giving the closing argument for OpenAI.

- Molo is done presenting Musk’s closing argument.

- For all of the very irritating testimony about “the blip,” Molo hasn’t convincingly connected it to his case.

- Molo is now attempting to make a case against Microsoft.

- During our break, the jurors were out of the room, and the lawyers were beefing again.

- We are back on the millions and billions of OpenAI equity that employees have interest in.

- This is kind of choppy.

- Molo is now suggesting that the “crown jewels” of OpenAI are its IP.

- Musk’s early money meant “a great, great deal,” Molo says. Inarguable!

- Molo calls Shivon Zilis, mother of Musk’s children, “the most even-keeled, even-tempered witness at the trial.”

- Ah we are back at the museum store funding the museum.

- Perhaps Mr. Molo is unfamiliar with xAI.

- Fair enough, Mr. Molo.

- I see why Molo is leaning on the spoken testimony.

- Molo is now calling out Altman’s testimony.

- Molo is suggesting that Greg Brockman’s conduct makes him untrustworthy.

- Molo has begun his closing statement for Elon Musk, who is in China.

- YGR is now reading aloud the instructions to the jury.

- The monitor has left the courtroom.

- We are now having a fight about a new, large monitor that has appeared on the Musk table.

- “They didn’t give us page numbers, your honor.”

- Musk left the country with President Trump despite a judge’s orders.

- Microsoft and OpenAI rest.

- In the most boring expert testimony yet, Louis Dudney, a forensic accountant, testified about how those funds were spent.

- The shade we are getting in here is incredible.

- The cross is focusing on Coates’ pay.

- John Coates, OpenAI’s expert witness, is running a demolition derby on Musk’s expert witness.

- Museum gift shop metaphor found dead in a ditch.

- We’re listening to an expert witness, David Hemel, a law professor at NYU.

- During Elon Musk’s all-hands Q&A before departing OpenAI, Achiam said he felt Musk wanted to “race towards AGI.”

- Achiam is running circles around this lawyer on cross, without doing the annoying things other witnesses have done.

- Okay, it’s time for the cross of Achiam.

- “I think he was just upset that he had been challenged,” Achiam said. “This was not friendly.”

- During the all-hands, Musk expressed concerns about what would happen if DeepMind got to AGI first.

- “It was a bit like seeing Bigfoot through Plexiglass,” Achiam says of seeing Elon Musk in the office.

- Ilya Sutskever would get up on tables to give speeches in the early days of OpenAI.

- Achiam talked about the roles of Greg Brockman and Ilya Sutskever in OpenAI’s early days.

- Josh Achiam described what it was like to work at OpenAI in 2017.

- Achiam started at OpenAI as an intern in the summer of 2017, and became a full-time employee in December.

- Hi my name is Josh Achiam and welcome to “will we see the jackass trophy?”

- Fairly stupid choice by Musk’s lawyers to go after Microsoft’s major decision rights.

- Musk cross. I guess we are now going to have a fight about due diligence.

- “Our due diligence found no conditions related to Elon Musk,” Wetter says.

- Mike Wetter for Microsoft is taking the stand now.

- Scott, who is wearing sneakers and a black crew neck under his blazer, seems quite pleasant on cross.

- We are now getting cross-examination from Musk’s lawyer.

- Microsoft’s CTO Kevin Scott is on the stand.

- Microsoft doesn’t want any of this

- In his testimony, Musk said he never called anyone a jackass.

- Incredible evidence dispute this morning.

- Sam Altman was winning on the stand, but it might not be enough

- About 200 people work on safety at OpenAI.

- The chair of OpenAI’s safety and security committee said they’ve formally delayed its model releases.

- Irritatingly, no one has asked him why he’s called “Zico.”

- Microsoft establishes that OpenAI has other investors…

- We see the Musk “bait and switch” texts again.

- What if we had a drinking game for this trial?

- Musk says, “This is a bait and switch” in an October 2022 text chain.

- Molo is not doing especially impressive lawyering here.

- Molo asked Altman if he’d ever fire himself as CEO of the OpenAI for-profit.

- “The blip” again.

- Well, I do love a long inquiry into the linear nature of time.

- The difference between Musk and Altman on cross is really stark.

- Ronan Farrow’s article is brought up.

- This cross is spicy!

- Mr. Molo is going directly in at Altman: “Do you always tell the truth?”

- “If I knew how difficult and painful this was going to be, I never would have tried,” Altman said.

- We are now talking about Altman’s investments.

- “I had poured the last years of my life, and I was watching it be destroyed,” Altman said.

- “I was in this like fog of war, I didn’t know what was going on,” Altman says of what happened next.

- We are now onto “the Blip.”

- Sam Altman says Elon Musk’s mind games were damaging OpenAI

- OpenAI has raised “approximately $175 billion” in investment, Altman says.

- Altman seems to be getting into his testimony…

- Musk didn’t invest in the OpenAI for-profit because “he was no longer going to invest in any startups he did not control.”

- It looks like Sam Altman discussed the for-profit OpenAI with Elon Musk in detail.

- “Unlike a lot of other meetings with Mr. Musk, this was a good vibes meeting.”

- Now into Shivon Zilis. Altman says he retained her on the board to try to keep friendly relations with Musk.

- “I was annoyed” when Elon Musk tried to recruit talent from OpenAI, Altman said.

- Musk resigned because he had lost confidence in OpenAI “and did not believe we were going to be successful.”

- Musk suspended his quarterly donations in 2017. That left OpenAI in “a very tough position.”

- When it was time to get more capital, Musk was pushing OpenAI to be acquired by Tesla.

- A particularly “hair-raising moment” for Altman was a succession plan from Musk.

- Elon Musk has control issues, Altman says.

- Sam Altman takes the stand in trial against Elon Musk

- OpenAI has called Sam Altman as a witness.

- Taylor says the reason OpenAI Foundation has been able to do more work is the recapitalization.

- Bret Taylor is back on the stand.

- We’ve talked about how this case isn’t just for whatever happens in the court…

- Bret Taylor has been asked to slow down twice.

- “OpenAI is decidedly not profitable,” Taylor said.

- There’s “a lot of tension” between LLMs and what Taylor calls “content companies”…

- Plaintiff rests. OpenAI calls its first witness, Bret Taylor of OpenAI Foundation.

- Ilya Sutskever says he was uncomfortable with Musk’s large ownership demand.

- Sutskever’s testimony is kind of a snooze so far.

- Satya Nadella is excused.

- A lot of people contact Satya Nadella about their boards, apparently!

- Microsoft’s lawyer is now back with Nadella.

- We are discovering that Satya Nadella knows very little about the OpenAI nonprofit.

- I can’t speak for the jury but I am very, very sick of hearing about “the blip.”

- “Not consistently candid” press release about Sam Altman’s firing is what Molo is citing as why Nadella should have known why Altman was fired.

- We are arguing now about risk and return.

- “I don’t want to be IBM and OpenAI to be Microsoft.”

- We are on cross, with Steven Molo for Musk.

- Satya Nadella seemed to forget he currently served on the board of a nonprofit.

- What is Copilot?

- During Altman’s ouster, Satya Nadella tried to reassure investors everything would not “crumble.”

- “Below them, above them, around them.”

- “We have each other’s phone numbers,” Nadella says of Musk.

- Nadella tells us that before the OpenAI partnership, Google was its biggest AI competitor.

- Satya Nadella is taking the stand, in a navy suit and a light blue tie with a white shirt.

- Jury is here. We are now finishing a video deposition from Friday about the OAI deal with MSFT.

- 👑

- We are having an argument about evidence.

- Musk v. Altman week two recap.

- Microsoft was worried OpenAI would run off to Amazon and ‘shit-talk’ Azure

- Mira Murati’s deposition pulled back the curtain on Sam Altman’s ouster

- Oh this tack is more effective. The OpenAI lawyer is going after Columbia…

- This cross of Schizer is pretty weak.

- Basically everything Schizer is saying is couched as a hypothetical…

- We are now hearing from David Schizer, one of Musk’s expert witnesses.

- We are still listening to McCauley.

- Tasha McCauley is testifying now in a video deposition.

- “Do you have any idea how you ended up in this courtroom?”

- I am having a hard time taking Rosie Campbell seriously.

- We are now hearing from Rosie Campbell, a former OpenAI employee.

- OpenAI’s board discussed merging with Anthropic during “the Blip.”

- Helen Toner is now talking about the board’s decision-making process.

- YGR is back on the bench.

- Musk’s biggest loyalist became his biggest liability

- We are going through the removal of Sam Altman from OpenAI in detail.

- Toner is relating how Sam Altman’s firing happened.

- Toner says she found out about ChatGPT by seeing screenshots on Twitter.

- Making AI models is “more like alchemy than chemistry,” Toner says.

- We are now looking at Helen Toner’s deposition.

- Microsoft would like to be excluded from this narrative.

- “It’s not in my neurons,” Zilis says, instead of “I don’t remember.”

- Sarah Eddy, an attorney representing OpenAI, got sarcastic with Zilis.

- Shivon Zilis brainstormed possible scenarios for AI.

- Musk offered Sam Altman a board seat at Tesla…

- Shivon’s emails aren’t great for Musk.

- The big sticking point for Brockman and Sutskever was control.

- Sam Altman loves exclamation marks.

- Mira Murati tells the court that she couldn’t trust Sam Altman’s words

- Zilis’ past emails mentioned in court proceedings include her referencing a potential “conversion to for-profit” for OpenAI.

- This is getting interesting.

- Zilis sent Altman a text message of support after his 2023 ouster.

- Zilis said another concern she had about Altman related to OpenAI’s potential deal with Helion.

- Also in the spirit of clarifications this morning…

- Zilis said she had major concerns about OpenAI’s board not being notified in advance of ChatGPT’s release.

- Zilis said that the fallout from Altman’s 2023 ouster changed her view of OpenAI’s Microsoft deal.

- When asked how much Musk works per week, Zilis laughed.

- Musk’s team has called Shivon Zilis.

- Murati says problems with Altman persisted after he returned to the company.

- “OpenAI was at catastrophic risk of falling apart” when Altman was fired, Murati says.

- We are seeing video testimony from Mira Murati’s deposition.

- We are clearing up “a few inaccuracies from yesterday.”

- We are taking care of some matters before the jury comes in.

- Microsoft and OpenAI’s definition of AGI was just revealed.

- The jurors look as bored as I feel.

- Brockman steps down. We are looking at the video deposition of Robert Wu.

- Brockman is telling the truth about considering removing Musk from the board.

- Every time Molo makes a summary of Brockman’s testimony, Brockman objects to it.

- We are now fighting about “Either go do something on your own or continue with OpenAI as a non-profit.”

- One other thing I don’t understand…

- Molo is trying to reiterate what he did more effectively yesterday.

- “You had no problems answering your lawyers’ questions,” Molo is practically yelling.

- Molo asks Brockman if Musk was “being mean” to him.

- We are back to quibbling.

- We are now discussing the OpenAI Foundation layoffs.

- Microsoft is done, bless them.

- Microsoft is now getting to talk to Brockman.

- The blip.

- We are now discussing Shivon Zilis.

- We are now going through the assorted releases of GPT models.

- When Musk resigned, he gave a speech to OpenAI’s employees that might have been demoralizing…

- One observation from Brockman and Sutskever’s emails.

- We are now recontextualizing more entries from Brockman.

- There were discussions between Brockman, Altman, and Sutskever about removing Musk from the board.

- We are back from a break.

- “I thought he was going to hit me,” Brockman says of Musk.

- Elon Musk doesn’t love anything he can’t control.

- Sam Altman discussed an equal equity split…

- We are now discussing Brockman’s journal.

- Brockman talks Dota 2.

- Elon Musk tried to get Bill Gates to donate to OpenAI.

- First sidebar of the trial.

- OpenAI had layoffs at Musk’s insistence.

- Greg Brockman tells the court that while at OpenAI, he and three others worked at Tesla.

- YGR is on the bench.

- Google’s AI architect lived rent-free in Elon Musk’s head

- OpenAI’s president does ‘all the things,’ except answer a question

- Jury is sent out for the day.

- We are hearing about the early days of OpenAI.

- Early worries about Musk came from Ilya Sutskever.

- Brockman is describing his bromance with Altman.

- “I do all the things.”

- Brockman says we are 80 percent of the way to AGI.

- OpenAI’s direct examination of Brockman is pretty sedate so far… aside from Tesla.

- OpenAI’s lawyers are now getting their shot at Brockman.

- For real, I think nerds should not testify in court.

- We are now looking at Brockman’s other financial dealings.

- We finished with the Microsoft investment pretty quickly.

- Altman didn’t return after we took our break.

- We are presently having a fight about purple boxes.

- We have been doing the same question for perhaps the last five minutes.

- “Financially what will take me to $1B?”

- “His story will correctly be that we weren’t honest with him in the end about still wanting to do the for profit just without him.”

- Greg Brockman’s journal: “it’d be wrong to steal the non-profit from him.”

- Brockman is not doing himself any favors.

- Brockman’s cross-examination isn’t as testy as Musk’s, but he’s also pushing back on a lot of questions.

- Is sending stuff to Sam Teller and Shivon Zilis the same as sending it to Musk?

- Brockman and Altman’s alliance?

- “Is Demis Hassabis evil?”

- Greg Brockman is talking about the earliest days of OpenAI.

- Greg Brockman and Sam Altman have just entered the courtroom.

- We’re done with Russell.

- “The age of abundance for Elon.”

- Oh now we have some meat.

- Elon Musk’s expert doesn’t follow him on X.

- I am befuddled by this expert testimony.

- We are dealing with the cross now.

- Sure is lucky that mentions of Grok’s safety issues got limited.

- Individual vs. systemic risk.

- We now have a very boring expert witness testifying to AI risks.

- Stuart Russell is here to tell us about AI.

- “I need that today. That’s good. I like that.”

- Greg Brockman won’t be asked about Musk’s threat.

- Elon Musk tried to settle before the trial — and got threatening.

- Musk v. Altman is getting a live audio stream next week.

- OpenAI Tesla receipts and other Musk v. Altman documents.

- All the evidence revealed so far in Musk v. Altman

- Here’s how Gabe Newell and Hideo Kojima ended up in the Musk v. Altman evidence.

- The craziest part of Musk v. Altman happened while the jury was out of the room

- Jury is being dismissed early so YGR can deal with an objection to Birchall’s testimony.

- Birchall is actually very funny outside of court? Good for him.

- We are now hearing about the pause in quarterly donations.

- We’re back.

- Second break of the day.

- Birchall cross.

- Elon Musk confirms xAI used OpenAI’s models to train Grok

- Birchall has just been asked about the four Teslas.

- Birchall testifies about Musk’s contributions to OpenAI.

- A woman in the gallery has lowered a sleep mask over her eyes and is attempting to sleep.

- Musk steps down. He may be recalled.

- We are on re-cross. Musk is getting testy again.

- The Microsoft investment comes back up.

- And we’re back.

- We’re in break — and I just checked out something interesting.

- Elon Musk’s robot army definitely will not kill you.

- Musk insists he wasn’t kneecapping OpenAI.

- Musk seems notably more subdued today.

- “At least change the name,” Musk says he told Altman.

- Elon Musk v. Capitalism.

- An “ongoing conversation” around open source.

- We’re still talking about whether Musk read the term sheet.

- The jurors have been seated.

- Musk has just entered the courtroom.

- “Issues of extinction are excluded.”

- Good morning!

- Elon Musk’s worst enemy in court is Elon Musk

- Freedom!

- Unfortunately we will not be talking about safety details of any specific product.

- The jury is leaving for the day. “I suspect it’s a nice day out there,” YGR says.

- MechaHitler might be a bad look for the AI safety defender.

- Musk’s broader AI safety commitment (or lack thereof) comes up.

- This is so testy.

- Did Musk even read the OpenAI term sheet?

- Musk asked Shivon Zilis to stay “close and friendly” with OpenAI to keep info flowing.

- Musk says xAI probably won’t be the first to get to AGI.

- We’re back from a break, talking about SpaceX and xAI.

- Don’t worry about Tesla’s robot army!

- “You mostly do unfair questions.”

- “It’s a free country.”

- “Will you answer my question?”

- Musk’s desire for control comes up again.

- “This is a hypothetical.”

- Did Musk initially envision OpenAI as a corporation?

- Musk is being combative on cross already.

- “I did say that I would commit up to a billion dollars, yes.”

- Is Tesla really not working on AGI?

- Musk is returning to the stand.

- At times, being a judge is much like being a kindergarten teacher.

- We’re on a break.

- “I mean, all due respect to Microsoft, do you really want Microsoft controlling digital superintelligence?”

- “What’s going on here this is a bait and switch.”

- A Musk-Altman spat about Microsoft.

- Musk really cannot help himself.

- “Capped profit” wasn’t an issue, even when Microsoft got involved.

- “Tesla is not pursuing AGI.”

- Musk is more on his game today.

- “After I received these reassurances that OpenAI would continue to be a nonprofit I continued to donate over $10 million.”

- “I actually was a fool who provided free funding for them to create a startup.”

- More discussion of who would own OpenAI.

- “I don’t lose my temper,” says Elon Musk.

- “2017 was a hard year, and we’ve made mistakes.”

- “I formed many for-profit tech companies, and could have done so with OAI,”

- “Crystal clear focus.”

- Sam Altman has just entered the room, right ahead of the jury.

- A member of the public just got dressed down by YGR about taking photos.

- Musk v. Altman et al. is back in session.

- In naming OpenAI, Elon Musk worried anything related to the Turing Test could mean bad PR.

- Elon Musk appeared more petty than prepared

- That’s a wrap!

- YGR scolds OpenAI for taking inconsistent positions on the origin of its name.

- Elon Musk tells the jury that all he wants to do is save humanity

- Arguments over ownership.

- Apparently OpenAI could have had an ICO.

- “I was not averse to a small for-profit,” Musk says.

- We’re reading emails between Musk and Jensen Huang.

- Musk says nonprofit was non-negotiable for OpenAI.

- We’re at the founding of OpenAI.

- Musk says he would have created something like OpenAI on his own.

- Musk recalls meeting Sam Altman.

- Sam Altman left during a break, but Elon Musk’s lawyer didn’t notice.

- “Here we are in 2026 and AI is scary smart.”

- “I have extreme concerns about AI,” says Musk.

- AI will be as smart as “any human as soon as next year.”

- Musk claims he has time for SpaceX, Tesla, Neuralink, and the Boring Company because he works a lot.

- Musk is telling the jury he (co)founded Tesla.

- Neuralink’s long-term goal is… AI?

- “There need to be things that people are excited about that make life worth living … Being out there among the stars can excite everyone.”

- A little Musk biography.

- Elon Musk, looking funereal in a black suit with a black tie, says “it’s not okay to steal a charity.”

- Elon Musk takes the stand in high-profile trial against OpenAI

- We are back from a break.

- Elon Musk will be the first witness in Musk v. Altman.

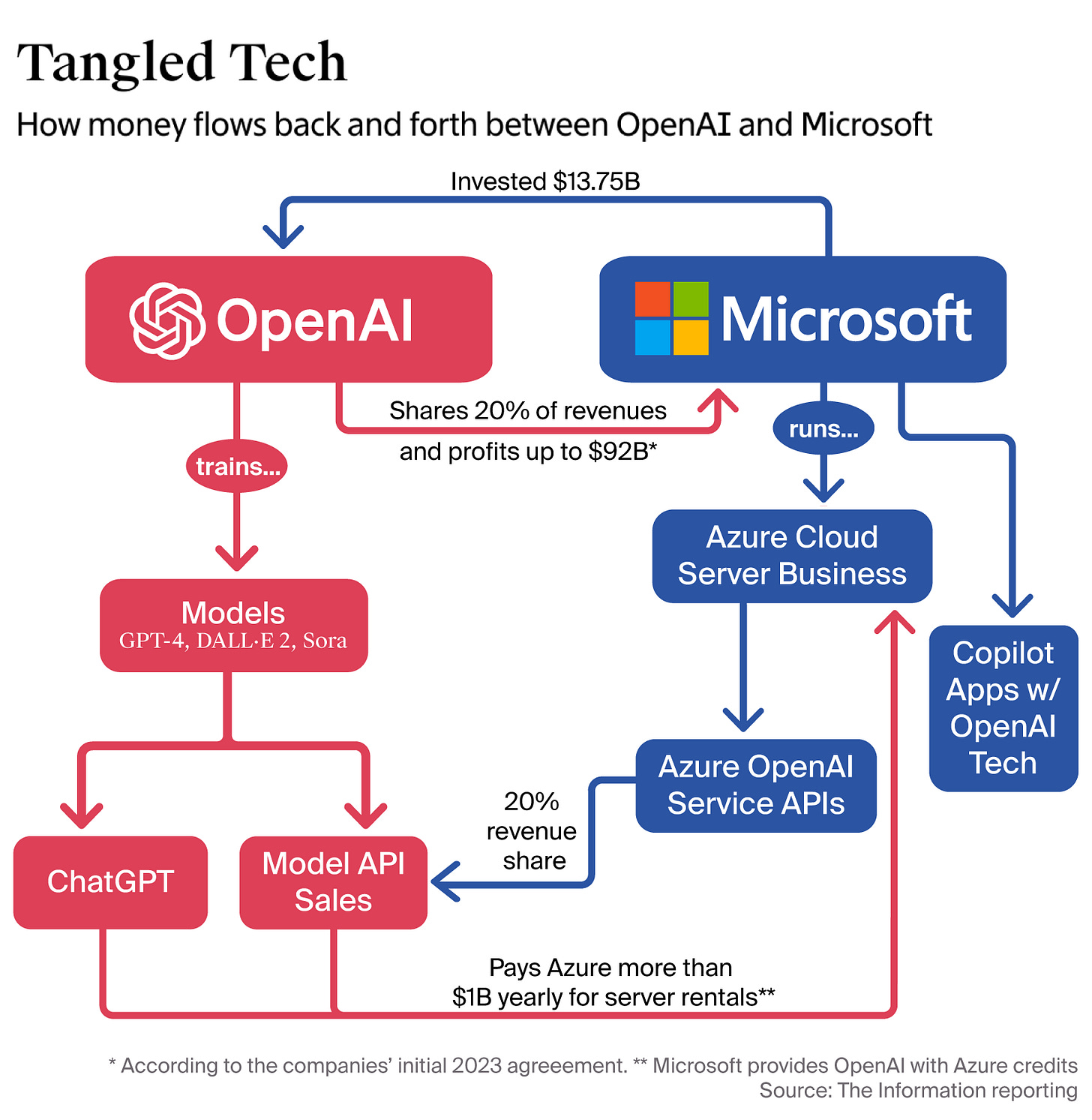

- “Microsoft unlocked with OpenAI a virtuous cycle.”

- Microsoft enters the chat.

- “We are here because Mr. Musk didn’t get his way at OpenAI.”

- “[Musk] demanded control, he demanded the ability to make all the decisions without regard to the other founders.”

- OpenAI lawyers argue that Elon was right in the middle of discussions about a for-profit pivot.

- “Musk was furious that OpenAI succeeded.”

- OpenAI: Musk’s lawsuit is a “pageant of hypocrisy.”

- Sam Altman’s “related party conflicted transactions” are how he made money on OpenAI, Molo says.

- Technical difficulties.

- OpenAI is like a museum store that has looted the Picassos and pocketed the profits.

- AGI might be out of fashion in the AI world, but it will be at the center of this trial.

- “The defendants in this case stole a charity.”

- Musk and Altman go to court

- Good morning from the Musk v. Altman line outside the courtroom.

- Jury selection in Musk v. Altman: ‘People don’t like him’

- We have a jury.

- Elon Musk’s lawyer tried to get some jurors thrown out for disliking Musk.

- Apparently things are exciting outside.

- We have gone through the first 20 potential jurors.

- Voir dire has begun.

- The Elon Musk vs. OpenAI trial starts today.

- Elon Musk drops fraud claims against OpenAI and Sam Altman before trial.

- Musk vs. Altman is here, and it’s going to get messy

- Elon Musk is about to be a very busy boy!

- ‘Sideshow’ concerns and billionaire dreams: What I learned from Elon Musk’s lawsuit against OpenAI

- Elon Musk’s xAI is suing OpenAI and Apple

- Inside Elon Musk’s messy breakup with OpenAI

- Elon Musk is suing OpenAI and Sam Altman again

-

Anthropic’s Code with Claude showed off coding’s future—whether you like it or not MIT Technology Review May 21, 2026 02:30 PM 5 min read As tools like Claude Code get better, more and more developers are happy to hand off coding tasks to them. The way software gets built has changed for good.

The vibes were strong at Code with Claude, Anthropic’s two-day event for software developers in London that kicked off on May 19, the same day as Google’s I/O in Palo Alto. (A coincidence, not a flex, Anthropic staffers assured me.)

“Who here has shipped a pull request in the last week that was completely written by Claude?” Jeremy Hadfield, an engineer at Anthropic, asked from the main stage. Almost half the people in the packed room—many sitting with laptops on their knees, coding or prompting as they watched the talks—raised their hands.

Pull requests are fixes or updates to existing software that are submitted for review before they go live. They are the bread and butter of software development, the chunks of code that most professional developers spend their lives writing—or did until now.

“Who here has shipped a pull request that was completely written by Claude where they did not read the code at all?” Hadfield asked next. Nervous laughter. Most of the hands stayed up.

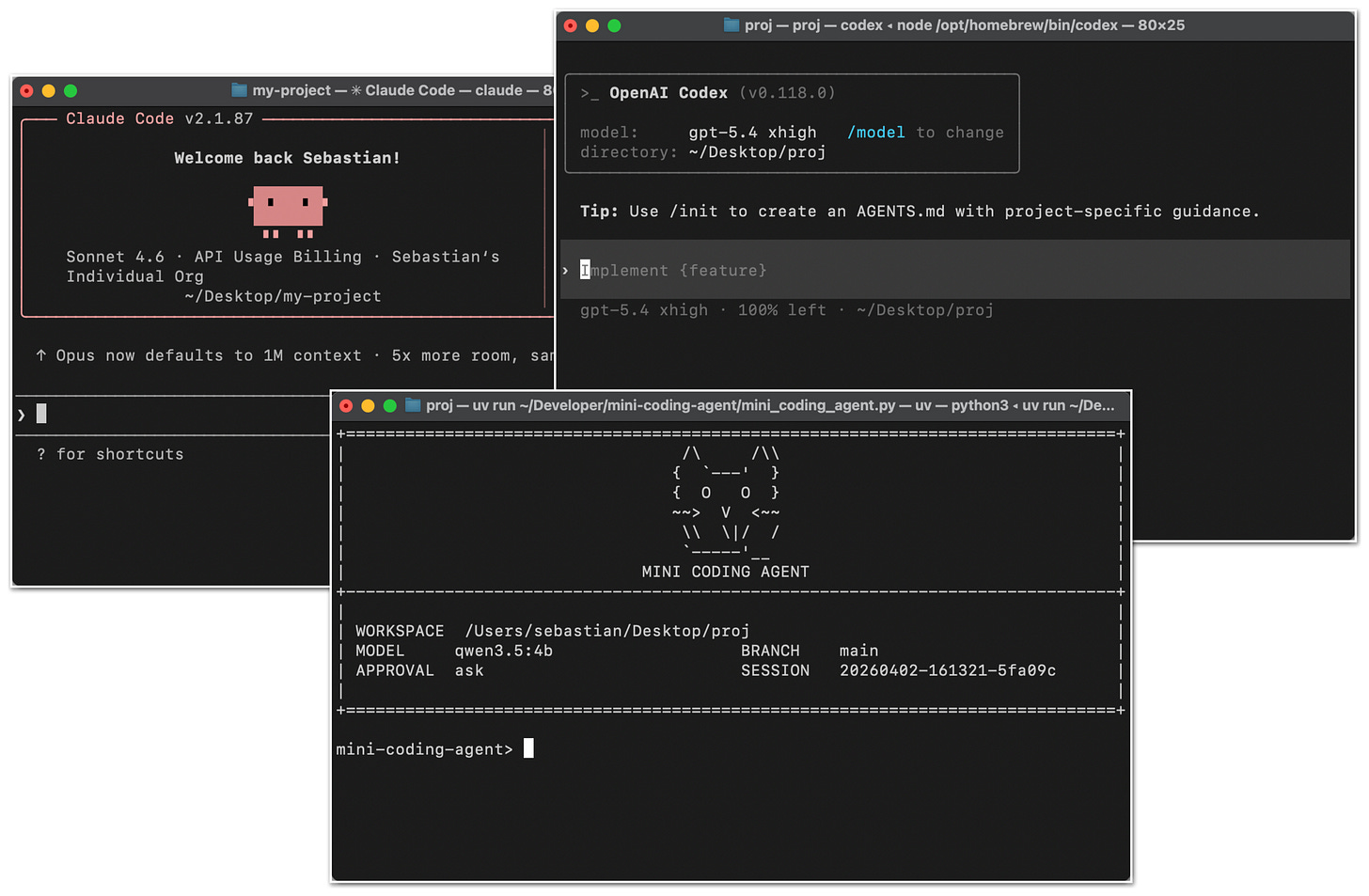

It’s not news that LLM-powered tools like Anthropic’s Claude Code and OpenAI’s Codex have upended the way software gets made. Top tech companies now like to boast of how little code their developers write by hand. (“Most software at Anthropic is now written by Claude,” Hadfield said. “Claude has written most of the code in Claude Code.”) OpenAI, Google, and Microsoft make similar claims. Many others wish they could.

Even so, it is striking how normal this new paradigm already seems, and how fast it has set in. This was the second year that Anthropic has put on developer events, which also run in San Francisco and Tokyo. This time last year, the company had just released Claude 4. It could code, kind of. But with Anthropic’s latest string of updates—especially Claude 4.6 and then 4.7, released in February and April—Claude Code is a tool that more and more developers seem happy to hand their work off to.

Let Claude cook. ANTHROPIC (GRAPHIC) / WILL DOUGLAS HEAVEN (PHOTO)

Anthropic says its goal is to push automation as far as it will go. Instead of using AI to generate code and then having humans clean it up and fix the mistakes, it wants Claude to check and correct its own work. “The default isn’t ‘I’m going to prompt Claude’—the default is now ‘I’m going to have Claude prompt itself,’” Boris Cherny, who heads Claude Code, said in the opening keynote.

If all goes well, human developers shouldn’t even see the error messages when something doesn’t work. That will all be handled by Claude, which will test and tweak, test and tweak, until everything runs as it should. As Ravi Trivedi, an engineer at Anthropic, put it in another talk: “The key principle is getting out of Claude’s way. We like to say: ‘Let it cook.’”

Trivedi presented a new feature in Claude Code, announced two weeks ago, which Anthropic calls dreaming. Claude Code agents write notes to themselves, recording and saving useful information about specific tasks. When another coding agent later starts to work on the same code, it can use the notes to get up to speed faster and learn from any errors that previous agents may have made.

Dreaming is a system that Claude Code uses to read through all these notes and consolidate the information they contain, spotting patterns and common issues across different tasks. In theory, dreaming should help Claude Code learn about a particular code base and get better and better at working on it.
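The consolidation step described above can be pictured with a small sketch. This is not Anthropic's implementation, which the article does not detail; the `AgentNote` structure, the `consolidate` function, and the sample notes are all hypothetical, and the "pattern spotting" is reduced to counting which issues recur across distinct tasks.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AgentNote:
    """One note an agent saved after a task (hypothetical structure)."""
    task: str
    issue: str  # short label for a problem the agent ran into


def consolidate(notes: list[AgentNote], min_tasks: int = 2) -> dict[str, int]:
    """Return issues seen in at least `min_tasks` distinct tasks."""
    # Count each issue once per task so a single noisy task cannot dominate.
    seen = {(note.task, note.issue) for note in notes}
    counts = Counter(issue for _, issue in seen)
    return {issue: n for issue, n in counts.items() if n >= min_tasks}


# Illustrative notes only; not taken from any real code base.
notes = [
    AgentNote("fix-login", "flaky integration test"),
    AgentNote("add-export", "flaky integration test"),
    AgentNote("add-export", "missing type hints"),
    AgentNote("fix-login", "flaky integration test"),
]
print(consolidate(notes))
```

Here only the issue that shows up in more than one task survives consolidation, which is the sense in which a later agent could start from the recurring problems rather than rereading every note.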

Success stories

Code with Claude is an event aimed at developers. As well as product showcases and hands-on workshops from Anthropic, there were how-tos from a range of companies that have reshaped their software development teams around Claude Code, including Spotify and Delivery Hero as well as Lovable, Base44, and Monday.com—three startups vibe-coding apps that help people vibe-code apps.

There were no signs of unease at Code with Claude. Everybody I met wanted in.

And yet outside the conference there have been a number of reports that many coders are starting to question this bright new future. Some gripe in online forums like Reddit and Hacker News that AI coding tools are being pushed by managers chasing productivity gains, when in practice the technology makes software development harder because of all the extra code developers now have to review. “The only people I’ve heard saying that generated code is fine are those who don’t read it,” a user called pron posted on Hacker News last week.

Others claim that their coding abilities have fallen off as they hand more tasks to AI. And researchers have warned that AI tools can produce unsafe code that will make software more vulnerable to attacks.

I sat down with Claude engineering lead Katelyn Lesse and Claude product lead Angela Jiang and asked them what they made of the concerns that a sudden flood of code generated (and shipped) without proper human oversight was kicking serious security and maintenance problems down the road.

“All of the old software development best practices still apply. They’ve applied this entire time,” said Lesse. “I think there are a lot of people and teams that may have lost sight of them in this moment.”

And yet as Anthropic and others push for greater automation and tools like Claude Code improve, the temptation increases to offload more and more tasks, including oversight. Lesse told me that some of the technical managers at Anthropic are exhausted by keeping up with all the code their teams now produce. “Part of things happening so much more quickly is just managing your time,” she said.

“I think that right now Claude is probably as good as a midlevel engineer at writing code,” she added. You still need expert engineers to design a system and troubleshoot harder problems, she said. “But over time we want Claude to get better and better at all different types of engineering.”

Jiang agreed: “I think the absolute end state we’re trying to get to is Claude basically being able to build itself.”

-

In desperate times, graduates find hope in humiliating tech CEOs The Verge AI May 21, 2026 04:00 PM 1 min read ‘They deserve everything they’re getting.’ (Boos.)

University graduates are booing and heckling corporate executives who praise AI during their commencement ceremonies, and the only people who seem to be genuinely surprised by this are the executives themselves.

In a procession of viral videos, 2026 commencement speakers like former Google CEO Eric Schmidt face loud and sustained jeers from students after praising AI and describing the technology as both inevitable and mandatory. The videos have clearly struck a chord among young people entering a bleak job market in an increasingly unstable world.

"They deserve everything they're getting," Penny Oliver, who recently graduated with a poli …

- I Cloned Myself With Gemini’s AI Avatar Tool. The Result Was Unnervingly Me Wired AI May 21, 2026 03:48 PM I used the Gemini app to generate lifelike videos featuring a digital clone of myself. Google sees this as the future of creation. I’m still creeped out.

- Spotify adds AI-powered Q&A and briefing generation features to podcasts TechCrunch AI May 21, 2026 03:27 PM Spotify will let you generate daily or weekly briefs based on your prompts

-

LWiAI Podcast #245 - TML-Interaction, Claude For Legal, Sam Altman on Stand Last Week in AI May 20, 2026 07:45 AM 2 min read OpenAI launches new voice intelligence features in its API, Thinking Machines drops a new, highly responsive model designed for humanlike interactions in real time, and more!

Our 245th episode with a summary and discussion of last week’s big AI news!

Recorded on 05/13/2026

Hosted by Andrey Kurenkov and Jeremie Harris

Feel free to email us your questions and feedback at andreyvkurenkov@gmail.com and/or hello@gladstone.ai

In this episode:

OpenAI released new voice intelligence API features including GPT Realtime 2 (GPT-5-powered) plus realtime translation and Whisper transcription, emphasizing the latency–reasoning tradeoff, larger context, and new guardrails amid fraud risks.

Thinking Machines previewed a low-latency, full‑duplex conversational system with a two-model architecture and custom inference stack, reporting strong interactivity benchmark results but without public access or third‑party validation yet.

Anthropic pushed further into vertical products with Claude for Legal and deeper AWS availability, while ongoing ecosystem tension grows as platform model providers compete with application-layer companies.

Safety, policy, and research updates included OpenAI’s self-harm trusted contact feature, Anthropic work on reducing agent misalignment by training ethical “why” reasoning, OpenAI’s investigation of accidental chain-of-thought grading in RL, and Meta horizon eval updates showing benchmarking limits for long task horizons.

Timestamps:

(00:00:10) Intro / Banter

(00:01:35) Response to listener comments

(00:03:27) Sponsor Break

Tools & Apps

(00:06:27) OpenAI launches new voice intelligence features in its API | TechCrunch

(00:27:49) Claude For Legal Launches, May Reshape the Legal Tech World – Artificial Lawyer

(00:40:27) Threads tests a Meta AI integration that works similarly to Grok | TechCrunch

(00:43:08) Google brings agentic AI and vibe-coded widgets to Android | TechCrunch

(00:45:33) Google updates AI search to include quotes from Reddit and other sources | TechCrunch

Applications & Business

(00:47:38) Sam Altman was winning on the stand, but it might not be enough | The Verge

(00:58:40) AWS expands Anthropic partnership with Claude Platform launch

(01:06:43) DeepMind Spinout Isomorphic Labs Raises $2.1 Billion to Design Drugs With AI - Bloomberg

Projects & Open Source

(01:09:04) Petri: Anthropic Hands Its Alignment Toolbox to Meridian Labs with 3.0 Update

(01:12:25) ‘Daybreak’: OpenAI’s Answer to Anthropic’s Project Glasswing Has Arrived

Policy & Safety

(01:14:04) Teaching Claude why

(01:21:45) Import AI 455: Automating AI Research

(01:28:31) ChatGPT’s New Safety Feature Could Alert ‘Trusted Contact’ to Risk of Self-Harm - CNET

(01:30:09) Investigating the consequences of accidentally grading CoT during RL

(01:34:46) Natural Language Autoencoders criticism

(01:39:15) Review of the “Risks from automated R&D” section in the Anthropic Risk Report (February 2026)

Synthetic Media & Art

Research & Advancements

- Spotify takes on Google’s NotebookLM with its new app TechCrunch AI May 21, 2026 03:27 PM Spotify is releasing the new desktop app as a research preview in more than 20 markets.

-

This AI guitar pedal let me roll my own effects The Verge AI May 21, 2026 01:00 PM 1 min read That new sound you’ve been looking for?

You can buy physical plates to pair with your AI effects. | Photo: Terrence O’Brien / The Verge

I'm not sure anyone was really asking for an AI guitar pedal. But it was inevitable that someone would build one. One of the first to take the plunge is Polyend, a well-respected music gear maker with a reputation for building niche, idiosyncratic devices. The company has built grooveboxes around old-school trackers and a multi-effect pedal that you can step sequence. So there was at least some hope that if anyone could do an AI effect pedal right, it would be Polyend.

Polyend's Endless is a $299 programmable guitar pedal running an ARM processor. It's paired with Playground, a number of interconnected AI agents that turn any text prompt i …

- Spotify launches an ElevenLabs-powered audiobook creation tool TechCrunch AI May 21, 2026 03:27 PM The AI-powered audiobook generation won't bind authors to an exclusive contract, meaning they are free to publish their generated audiobooks anywhere.

-

Google just redesigned the search box for the first time in 25 years — here’s why it matters more than you think. VentureBeat AI May 19, 2026 05:45 PM 10 min read

For a quarter century, the Google search box has been one of the most recognizable interfaces in computing: a thin white rectangle, a blinking cursor, a few typed words, and a list of blue links. On Tuesday, Google will formally retire that paradigm.

At its annual I/O developer conference, Google announced a sweeping redesign of the search box itself — the literal text field where billions of queries begin every day — transforming it from a simple keyword input into a dynamic, AI-driven conversation starter that can accept text, images, PDFs, videos, and even open Chrome tabs as inputs. The company is also merging its AI Overviews and AI Mode features into a single, seamless search flow, eliminating the friction that previously forced users to choose between a traditional results page and an AI-forward experience.

Liz Reid, Google's vice president and head of Search, called it "the biggest upgrade to our iconic search box since its debut over 25 years ago" during a press briefing on Monday.

The announcement arrived alongside a blizzard of other news — new Gemini models, a personal AI agent called Spark, an intelligent shopping cart, a reimagined developer platform — but the search box redesign may prove to be the most consequential. It is the clearest signal yet that Google views the future of its flagship product not as a place where users type fragmented keywords, but as an interface where they hold open-ended, multimodal conversations with an AI system backed by the entire web.

The new search box expands, accepts files, and coaches you on what to ask

The changes show a fundamental shift in how Google expects people to interact with the product that generates the vast majority of Alphabet's revenue.

The box itself now dynamically expands to accommodate longer, more conversational queries. Where the old interface subtly encouraged brevity — a narrow field suited to two- or three-word keyword strings — the new design invites users to fully articulate complex questions in granular detail. It also now supports multimodal inputs directly. Users can upload images, PDFs, files, and videos, or drag in content from Chrome tabs, right from the main search interface. Previously, some of these capabilities existed in AI Mode, but reaching them required extra steps. Now they sit at the primary entry point.

Google is also deploying what it describes as an AI-powered query suggestion system that "goes beyond autocomplete." Rather than simply predicting the next word a user might type based on popular searches, the system helps users formulate complex, nuanced queries — essentially coaching them toward the kind of detailed questions that AI Mode handles best.

The new search box is starting to roll out immediately in all countries and languages where AI Mode is available.

Google is merging AI Overviews and AI Mode into one seamless experience

Perhaps more significant than the box itself is the architectural change happening behind it. Google is unifying AI Overviews — the AI-generated summary panels that appear atop traditional search results — with AI Mode, the more immersive conversational search experience the company launched at I/O one year ago.

Starting Tuesday, this merged experience will be live across mobile and desktop worldwide. A user can type a question, receive an AI Overview alongside traditional results, and then continue directly into a back-and-forth AI Mode conversation to ask follow-up questions — all without navigating to a separate interface.

Reid explained the logic during the press briefing: the new AI search box is "an upgrade of our traditional search box, and so the results take you directly to main search rather than AI mode." She noted that while some power users actively sought out AI Mode, "for most users, they don't actually want to have to think about, do they want more of a traditional page or an AI-forward search experience."

The goal, she said, was to ensure that "for most users, they don't have to think about where to go, they can just go to the search box they're familiar with, and it feels like they get the best experience afterwards."

One billion users and doubling queries reveal how fast search behavior is shifting

Google's decision to redesign the foundational interface of its most important product did not happen in a vacuum. The company shared a set of usage statistics during the briefing that reveal just how rapidly user behavior is already changing.

AI Mode, which launched in the United States at I/O 2025, has surpassed one billion monthly users in its first year. AI Mode queries have been doubling every quarter since launch. AI Overviews, the lighter-weight AI summaries, now reach more than 2.5 billion monthly users. And overall search query volume hit an all-time high last quarter — a data point the company had previously disclosed on its earnings call.
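To get a feel for what quarterly doubling implies, here is a rough calculation. It assumes exactly four full quarters between the I/O 2025 launch and this briefing and a clean 2x each quarter; Google did not share the underlying query counts, so only the relative growth factor is computed, and `growth_factor` is an illustrative helper.

```python
def growth_factor(quarters: int, multiplier: float = 2.0) -> float:
    """Cumulative growth after `quarters` periods of compounding."""
    return multiplier ** quarters


# Relative query volume at the end of each quarter, with launch volume as 1.
for quarter in range(5):
    print(quarter, growth_factor(quarter))
```

Under those assumptions, four quarters of doubling works out to a 16-fold increase over launch volume.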

Sundar Pichai, Google's CEO, framed these figures as evidence that AI features are additive, not cannibalistic, to search usage. "When people use our AI-powered features in search, they use search more," he said. He added that he loves "how search has become less about individual queries and feels more like an ongoing conversation, giving users deeper insights and connecting you with the vastness of the web."

Reid reinforced the point: "It's not just that people are searching more, it's that they're searching differently. They're fully expressing their questions in granular detail, asking those follow-up questions and searching across modalities."

Gemini 3.5 Flash gives Google's AI search the speed it needs to work at scale

Under the hood, the new search experience runs on Gemini 3.5 Flash, Google's newest AI model, which the company also introduced at I/O. Google upgraded AI Mode's underlying model to 3.5 Flash to deliver what Reid described as "an even more powerful AI search experience."

Gemini 3.5 Flash is the workhorse of this year's announcements. Google claims it outperforms its previous frontier model, Gemini 3.1 Pro, on nearly all benchmarks while running four times faster in output tokens per second than comparable frontier models. Pichai described it as being "in a league of its own in the top right quadrant" of the Artificial Analysis index, which plots intelligence against speed — meaning it delivers near-frontier quality at dramatically lower latency.

That speed matters enormously for search. A conversational AI search experience that feels sluggish would be dead on arrival for a product that serves billions of queries daily. By coupling the redesigned interface with a model optimized for both quality and throughput, Google is attempting to make AI-powered search feel as instantaneous as the old keyword experience — while being dramatically more capable.

Search can now build interactive visuals and custom mini apps on the fly

The redesigned search box is also the gateway to a set of new capabilities that push search far beyond text-based answers. Google announced what it calls "generative UI" — the ability for search to dynamically build custom widgets, interactive visualizations, and even mini applications in real time, tailored to a user's specific question.

Reid offered a concrete example during the briefing: a user could ask "How do black holes affect space time?" and receive an interactive visual in an AI Overview that brings the concept to life. Follow-up questions would trigger the system to dynamically generate entirely new visuals in real time. This is possible, she explained, because of "a novel real-time code generation system we built in partnership with the Google DeepMind team" that runs on Gemini 3.5 Flash. Generative UI capabilities will roll out to everyone this summer, free of charge.

But Google is going further still. For ongoing tasks — planning a wedding, organizing a move, tracking a fitness routine — users will be able to build what the company describes as customizable, stateful experiences within search, powered by its Antigravity development platform. These require no coding expertise. Users simply describe what they want in natural language, and search builds it. Those experiences will be available in coming months, starting with Google AI Pro and Ultra subscribers in the United States.

AI agents that monitor the web around the clock are coming to search results

The redesign also opens the door to what Google calls "information agents" — AI agents that users can configure directly within search to monitor the web 24/7 for specific conditions and deliver synthesized updates when those conditions are met.

A user could, for example, set up an agent to track market movements in a particular sector with specific parameters. The agent would create a monitoring plan, tap into real-time finance data, and proactively notify the user when conditions are met — complete with links and context for further research. Other use cases include apartment hunting, tracking sneaker drops, or monitoring any topic a user cares about. Information agents will launch first for Google AI Pro and Ultra subscribers this summer.

These agents sit within a much larger strategic pivot that Google articulated throughout the briefing: the company is going all-in on AI systems that don't just answer questions but proactively take actions on users' behalf. Beyond search, Google introduced Gemini Spark, a 24/7 personal AI agent that runs on dedicated virtual machines in Google Cloud. It unveiled the Universal Cart, an intelligent cross-merchant shopping cart. It announced the Agent Payments Protocol for agents to make secure purchases. And it expanded its Antigravity developer platform into a full ecosystem for building autonomous AI agents.

Publishers, advertisers, and SEO professionals face a new reality

The redesign raises profound questions for the sprawling ecosystem — publishers, advertisers, SEO professionals — that has been built around the old model of keyword search and blue links.

If users increasingly express their needs as full, conversational sentences rather than fragmented keywords, the entire discipline of search engine optimization will need to evolve. Keyword-density strategies become less relevant when the AI is parsing natural language intent rather than matching strings. Content that answers deep, nuanced questions in authoritative ways becomes more valuable; content engineered to rank for two-word keyword fragments becomes less so.

For publishers, the stakes are existential. AI Overviews already synthesize information from across the web and present it directly in search results, reducing the need for users to click through to source material. The new seamless AI Mode integration deepens that dynamic: users can now get an AI-generated answer and ask multiple follow-up questions without ever leaving the search page. Google has consistently maintained that its AI features drive more traffic to publishers, but the redesign puts that claim under renewed scrutiny as the search results page becomes more self-contained.

For advertisers, who account for the vast majority of Google's revenue, the shift from keywords to conversations changes the calculus of ad targeting. Conversational queries contain richer intent signals, which could make ad targeting more precise and valuable. But they also create new ambiguities: when a user is in the middle of a multi-turn conversation with AI Mode, where does an ad naturally fit? Google did not detail changes to its advertising model during the briefing, but the structural shift in the interface will inevitably reshape how ads are surfaced and measured.

The search box was always more than a product — it was a habit for billions of people

There is a reason Google chose to redesign the search box rather than simply adding new features behind it. The search box is not just a product element at this point; it is a cultural artifact — one of the few pieces of digital infrastructure used by essentially the entire internet-connected world. Changing it sends an unmistakable message about where the company believes computing is headed.

For 25 years, the search box trained billions of people to think in keywords — to compress their curiosity into the shortest possible string of words. The new box invites them to do the opposite: to think out loud, to upload what they're looking at, to ask follow-up questions, to let an AI system handle the compression.

Pichai tied the company's broader ambitions to a striking statistic: Google's surfaces now process over 3.2 quadrillion tokens per month, up seven-fold from a year ago. The company expects capital expenditures of approximately $180 to $190 billion in 2026 — roughly six times the $31 billion it spent four years ago — largely to support the infrastructure required for this AI transformation. When asked about the future of traditional search, he was direct. "Search is the most used AI product in the world," he said.

The blinking cursor in Google's search box still invites you to type. But after 25 years of teaching the world to speak in keywords, Google is now asking it to speak in sentences — and betting roughly $190 billion that it will.

-

Spotify is launching AI-generated remixes The Verge AI May 21, 2026 11:54 AM 1 min read UMG is first to strike a licensing deal.

Spotify and Universal Music Group (UMG) just announced a licensing deal that will allow users to prompt the creation of AI-generated remixes and covers for streaming songs. The tool will be a paid add-on for Premium subscribers. Artists will be able to opt out of the program, but those who do participate will collect royalties on these AI remixes.

In October of last year, Spotify announced that it was working with UMG, along with Sony Music Group, Warner Music Group, Merlin, and Believe, to create "responsible AI products." At the time, it was unclear exactly what that meant. But this appears to be the first product of t …

-

Two AI-based science assistants succeed with drug-retargeting tasks Ars Technica AI May 19, 2026 06:55 PM 1 min read Both tools generate hypotheses; one goes on to analyze some of the data.

On Tuesday, Nature released two papers describing AI systems intended to help scientists develop and test hypotheses. One, Google's Co-Scientist, is designed as what they term "scientist in the loop," meaning researchers are regularly applying their judgments to direct the system. The second, from a nonprofit called FutureHouse, goes a step beyond and has trained a system that can evaluate biological data coming from some specific classes of experiments.

While Google says its system will also work for physics, both groups exclusively present biological data, and largely straightforward hypotheses—this drug will work for that. So, this is not an attempt to replace either scientists or the scientific process. Instead, it's meant to help with what current AIs are best at: chewing through massive amounts of information that humans would struggle to come to grips with.

What's this good for?

There are some distinctions between the two systems, but both are what is termed agentic; they operate in the background by calling out to separate tools. (Microsoft has taken a similar approach with its science assistant as well; OpenAI seems to be an exception in that it simply tuned an LLM for biology.) And, while there are differences between them that we'll highlight, they are both focused on the same general issue: the utter profusion of scientific information.

- SpaceX Listed Grok’s ‘Spicy’ Mode as a Risk in Its IPO Filing Wired AI May 21, 2026 12:43 AM The rocket company has set aside more than $500 million for potential litigation losses, in part to account for complaints alleging that Grok created sexualized images.

- The Path, founded by Tony Robbins and Calm alums, hopes to offer safer AI therapy TechCrunch AI May 21, 2026 02:00 PM The Path says its AI model has scored 95 on the mental health safety AI benchmark, Vera-MH. This compares to a top score of 65 for the consumer bots.

-

Spotify Studio’s AI agent creates a daily podcast just for you The Verge AI May 21, 2026 11:47 AM 1 min read Music, podcasts, and a podcast that’s all about you.

Studio by Spotify Labs is a new standalone AI app that generates a daily briefing, podcasts, and playlists on your PC using chatbot prompts. The AI-generated content draws from your Spotify listening history, as well as info from apps you connect to it, like your email inbox, calendar, and notes. Spotify says its AI can also "take action on your behalf," such as "researching topics, using a web browser, organizing information, and helping complete tasks."

Any content you generate in Studio, like a daily briefing podcast, can be saved to your Spotify library. It will be launching "in the coming weeks" as a research preview for users 18 and …

- SpaceX Is Spending $2.8 Billion to Buy Gas Turbines for Its AI Data Centers Wired AI May 20, 2026 11:30 PM The investment comes as Elon Musk’s AI unit faces complaints about the carbon-emitting units and looks to become a big player in cloud computing.

-

Hark raises $700M Series A for its secretive ‘universal’ AI interface TechCrunch AI May 21, 2026 02:00 PM 1 min read Hark expects to release its first multimodal models this summer, which it says will power a personal AI platform that works with existing products and services. The company expects to follow that with hardware devices built specifically for those systems.

-